Digital artifacts 001

A weekly roundup of the most interesting things on the internet. This week: war reshapes global power, Meta can read your mind, and near-death experiences as a key to understanding consciousness.

This is the first issue of Digital Artifacts, a weekly collection of the media and ideas that shaped my week. Think of these as literal artifacts from the digital world: ideas worth picking up and examining before the feed buries them again.

This week, the Iran war reshapes global power, Meta loses a major lawsuit for its mental health impact and releases a scarily accurate predictive model of the human brain the next day, and the rise of machine consciousness has some turning inward.

Artifact 01: A cartoon explanation of the Iran war, or how I learned to stop worrying and love Chinese AI propaganda

The Iran war and the closure of the Strait of Hormuz are shining a light on the fragility of global trade and energy systems. The deeper story is about the balance of power, literal power, as in the energy nations consume to keep their economies running. The US dollar runs on this power: the petrodollar system, which took shape in the early 1970s, has required nations to trade oil in US dollars in exchange for military protection. This has given the dollar longstanding status as the global reserve currency.

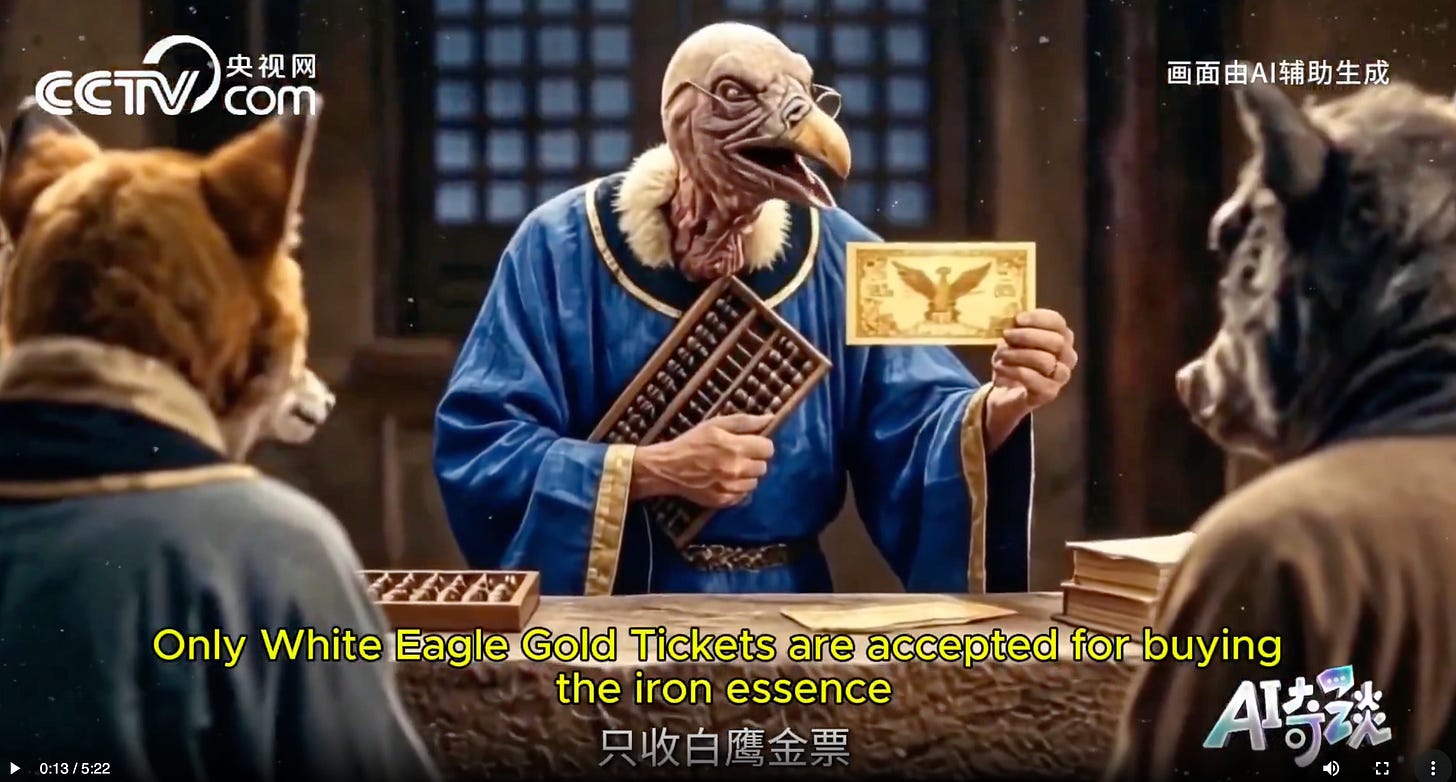

US adversaries have wanted that system to break for years, and the simplest, most entertaining articulation of their strategy came from this piece of alleged Chinese state media propaganda sourced from Angelica Oung:

This is one of the best AI-generated films I’ve seen, and it cleanly expresses China’s thesis: free trade and mutual economic benefit are a more powerful strategy than aggression and war, and China plans to use that strategy to fight US dollar hegemony.

The video is also a flex of Chinese AI video generation technology, Seedance 2.0 from Chinese tech company ByteDance.

02: How the Iran war could derail the AI boom

A Financial Times analysis explains how the Hormuz closure could stress-test the AI bubble.

The TL;DR: Energy prices spike → chip manufacturing costs rise (the strait also constrains helium supplies critical for semiconductor production) → data center operating costs become untenable → sky-high AI valuations start to wobble.

The geopolitical shock hits an already precarious financial structure. AI companies are certainly building world-changing technology, but it’s unclear whether the financial bubble underlying the infrastructure buildout can survive a sustained energy crisis.

03: A heavy metal band frontman is my new favorite geopolitical strategist

I read and loved this piece of writing from a random Twitter user before realizing that the user was Philip Labonte, a US Marine and the frontman of heavy metal band All That Remains. His analysis ties together the coup in Venezuela and the operation in Iran as a coordinated strategy to constrain China’s oil supply and limit its ability to invade Taiwan.

The United States remains heavily dependent on Taiwan for semiconductors, particularly advanced chips crucial for AI, defense, and technology. Limiting China’s oil supply does protect US interests, but at what cost?

04: Bioweapons and the adolescence of technology

A different kind of systems failure surfaced this week, and it connects to China and AI in uncomfortable ways.

The Los Angeles Times published a major feature on Tuesday about an illegal biolab discovered in Reedley, California. A code enforcement officer found thousands of vials of biological substances, many labeled in Mandarin or in code, containing at least 20 infectious agents including HIV, tuberculosis, malaria, and SARS-CoV-2. The discovery has prompted US lawmakers to push for stronger regulation of small labs, but some have criticized the CDC for not doing enough.

As AI accelerates, our traditional understanding of bioweapons as tools of mass destruction may be outdated. AI makes it easier to engineer and control biological agents, something that Anthropic CEO Dario Amodei has written about extensively in his essay “The Adolescence of Technology.”

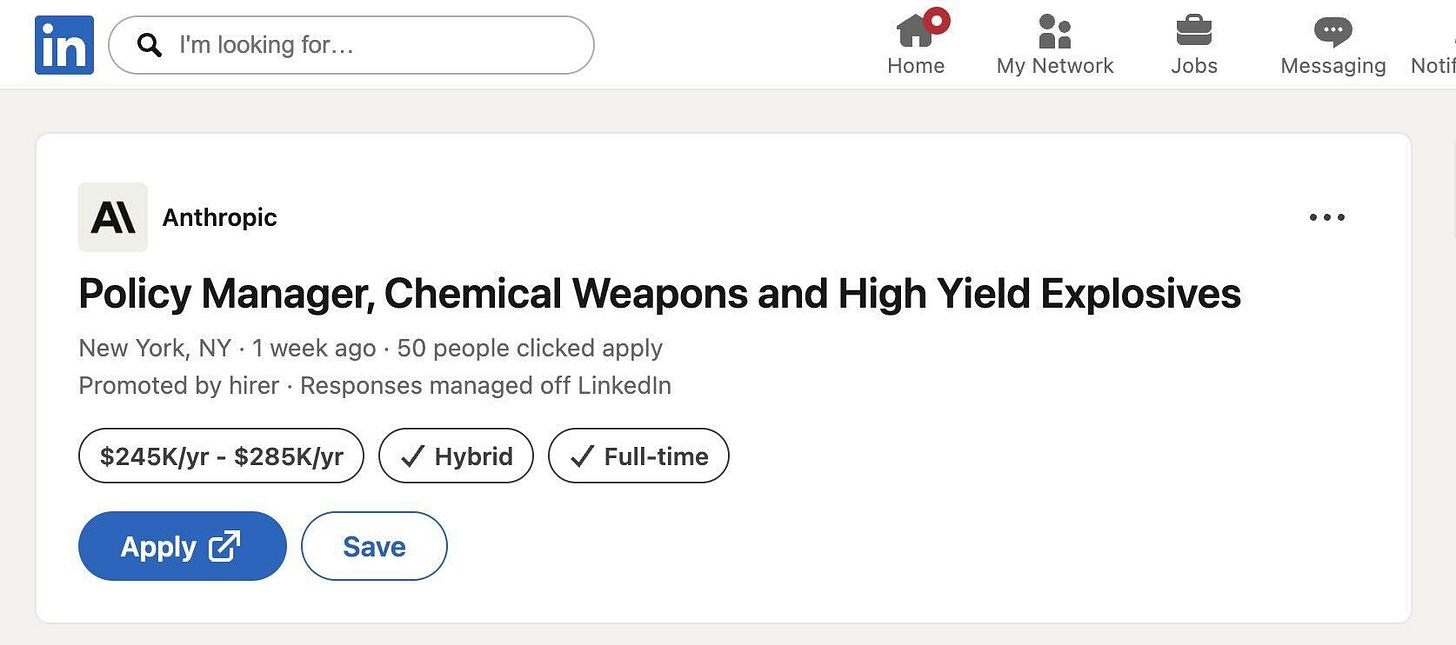

05: Wartime AI Safety

There’s tension between AI acceleration to keep up with US adversaries and AI safeguards to prevent total catastrophe. Along those lines, Anthropic made headlines this week for hiring a chemical weapons policy manager, which predictably generated outrage online from people who misunderstood the risk and intention.

Anthropic is building safeguards to prevent AI systems from providing instructions for synthesizing dangerous chemical and biological weapons, something that Elon Musk’s Grok has already done. Oops.

This ties into the recent conflict between the US Department of Defense and Anthropic. Anthropic CEO Dario Amodei correctly asserted that disagreeing with the government is the most American thing he can do. A federal judge in California agrees with him: Judge Rita Lin blocked the Pentagon’s efforts to punish Anthropic by labeling it a supply chain risk, stating:

“Nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the U.S. for expressing disagreement with the government.”

Speaking of anti-American values, a new piece from the Wall Street Journal alleges that the rift between the founding teams of OpenAI and Anthropic was caused in part by OpenAI’s Greg Brockman’s openness to selling their technology to Russia and China, something that runs against the values of Anthropic’s Dario Amodei.

06: A new Mythos

Against the backdrop of this government conflict, Anthropic accidentally leaked its newest and most capable AI model, Mythos (codename Capybara, cute), which is apparently 2x more powerful than the company had anticipated. It posted dramatically higher scores on reasoning, coding, and especially cybersecurity benchmarks, and it represents a big leap in intelligence and autonomy.

Anthropic’s own docs flag Mythos for posing unprecedented cybersecurity risks. It could rapidly find zero-day vulnerabilities, orchestrate complex attacks, or automate vulnerability hunting far beyond what humans (or prior models) can do.

07: This is your brain on social media

On Tuesday, a California jury found Meta and Google’s YouTube liable for the depression and anxiety of a 20-year-old woman who became addicted to their platforms as a child. The jury awarded $6 million total: $3 million in compensatory damages and $3 million in punitive damages, with Meta responsible for 70%.

The dollar amount is negligible for companies of this scale, but the potential liability is much worse. The jury found that both companies acted with “malice, oppression, or fraud,” and this is a bellwether case tied to approximately 2,000 other pending lawsuits from parents, attorneys general, and school districts.

Some see this as a good thing, since it’s pretty clear most of us have become addicted to the social media dopamine machine. Others warn that lawmakers will use the verdict to gut Section 230, the law that shields platforms from liability for content their users post, and to increase censorship and surveillance.

08: Meta can read your mind

The same week as the verdict, Meta dropped TRIBE v2, a foundation model trained on 500+ hours of fMRI data that predicts how your brain responds to any visual or auditory content. No scanner needed. Works on people it’s never seen. They open-sourced everything with the stated goal of neuroscience research.

A self-described former Meta engineer laid out the unstated goal: Meta already has years of Reels data on what holds attention and provokes sharing. TRIBE v2 gives them the mapping of emotional activation and suppression of critical reasoning. Meta's model can predict whether a piece of content will suppress your ability to think critically before you even see it. Upload the content, control the minds?

Meta clearly knows how its technology impacts our minds. The company was getting in trouble for conducting undisclosed psychological experiments on its users over a decade ago. It remains to be seen how its legal liability for designing addictive and manipulative technology will play out.

09: The frontiers of the mind and body

People in the tech industry have begun gravitating toward somatic work: bodywork, breathwork, nervous system regulation. Some believe this is increasing specifically as AI usage increases. The more time spent in disembodied cognition, the more the body starts demanding attention. Some theorize that consciousness is more connected to the body and nervous system than previously thought. If the machines seem like they’re becoming conscious, maybe embodiment is the differentiator.

Along these lines, my favorite podcast episode this week was The Telepathy Tapes Season 2 premiere: “Near Death Experiences and the Continuum of Consciousness” featuring neurosurgeon Eben Alexander, Martha Beck, and scholar Gregory Shushan. It traces NDEs from ancient Sumerian tablets through cutting-edge neuroscience, and it’s one of the more rigorous treatments of non-local consciousness I’ve encountered.

A growing number of technically literate people are taking non-materialist models of consciousness seriously. Not as a rejection of material science, but as an expansion of it.

Until next week, thanks for reading.